The artificial intelligence tools that defined the last three years were built on a set of assumptions that describe the daily reality of a small minority: stable broadband, unlimited data, and frictionless international payment rails. For everyone else, these baseline conditions represent a structural barrier to entry. While cloud-based AI requires a constant tether to high-end servers, Google’s release of Gemma 4 E2B on April 2, 2026, signals a pivot toward offline agency.

This goes beyond a simple version release. For the first time, the smarts of the model no longer depends on the user being connected.

This transition follows a period of significant friction for Google’s AI ambitions. Last year, the company was forced to pull an earlier iteration of Gemma after it was found to be fabricating data, including criminal allegations against public figures. This stumble raised valid questions about the reliability of “open” models. Gemma 4 is the redemption arc for that failure, focusing not on raw scale, but on the technical logic of where and how a model operates.

On an iPhone 17 Pro, this model processes roughly 40 tokens per second; even on an older iPhone 11, it maintains a functional rate of 20 to 35.

Why on-device AI is possible now

The timing of this release is not accidental. It is the result of two factors converging: the ubiquity of high-performance neural engines in mobile chips and a breakthrough in per-layer embedding architecture. This engineering allows Gemma 4 to compress its memory footprint aggressively without the proportional drop in quality that usually plagues small models. The E2B variant (Effective 2 Billion) fits into a 1.5GB RAM footprint at four-bit quantisation, meaning it can run on the mid-range hardware already found in many pockets across Lagos or Nairobi.

Unlike Gemini, which exists as a service within Google’s cloud, Gemma consists of open weights that a user downloads and owns. This shift removes the need for subscriptions, API calls, or server pings. Under the Apache 2.0 license, a developer can incorporate this intelligence into a commercial app and charge for it without owing Google a percentage of the revenue or complying with restrictive cloud-usage policies. For a developer in a volatile economy, this provides a level of cost predictability that cloud-based APIs cannot match.

The redistribution of access

In Nigeria, where mobile internet speeds often sit below 20 Mbps and prepaid data plans make every query a financial decision, the offline capability of Gemma 4 is a redistribution of access. Traditional AI tools like ChatGPT Plus or Claude Pro require $20 monthly subscriptions denominated in dollars—a significant barrier given current currency fluctuations and international payment restrictions.

By removing the query cost and the data dependency, the model creates immediate utility in high-friction environments:

- The Marketplace: A trader can photograph a supplier’s price list and receive an instant, offline comparison or summary without using a single kilobyte of data.

- The Classroom: A teacher in a location with throttled Wi-Fi can scan handwritten student work to generate immediate feedback.

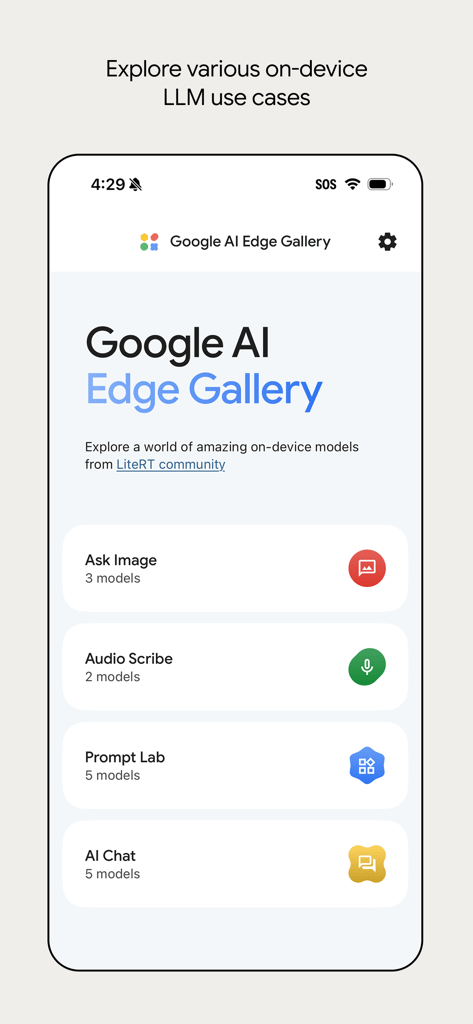

- The Journalist: A reporter can transcribe audio via the “Audio Scribe” feature during a power cut or in transit.

This local processing also addresses a material privacy concern. For sensitive work involving legal, medical, or financial data, the guarantee that a prompt never leaves the device is an essential safeguard, not an abstract preference.

Furthermore, because the model lives on the device, researchers can fine-tune it on Yoruba, Igbo, or Hausa datasets without routing the data through a foreign cloud provider that may not prioritise local language nuances.

Deployment realities and the Android roadmap

The barrier to using this technology has been significantly lowered through the AI Edge Gallery app, which handles the model download and execution without requiring technical knowledge of sideloading or token authentication. For Android users, the move is particularly strategic: code written for Gemma 4 today will be compatible with Gemini Nano 4, the embedded AI layer arriving in Android devices later this year.

However, the operational bounds of the model remain real. While it excels at summarisation, image analysis, and document review, it cannot match the deep, multi-step reasoning of the largest cloud models.

Battery consumption is also a factor; local inference is a processor-intensive task that will drain a device faster than standard applications. Additionally, the model’s internal knowledge is currently fixed to a January 2025 cutoff, though features like the “Agent Skills” Wikipedia integration offer a workaround for factual queries.

The bigger picture

The centralisation of AI has always carried the risk of excluding users whose infrastructure did not meet the baseline assumptions of Western tech hubs. Gemma 4 E2B does not solve every hardware gap, but it changes the trajectory. It demonstrates that as models become more efficient, they require less borrowed infrastructure to remain useful.

We are moving toward a future where the conditions that describe most of the world—intermittent power, high data costs, and fluctuating connectivity—no longer dictate who gets to use the world’s most advanced tools.

Read also: Google’s Antigravity and the race to let AI code for you

Get passive updates on African tech & startups

View and choose the stories to interact with on our WhatsApp Channel

ExploreLast updated: April 16, 2026